Time: 2025-08-26

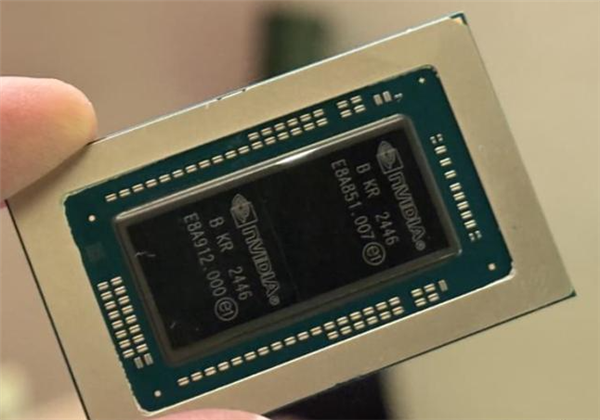

On August 26, Fast Tech reported that NVIDIA has officially released Jetson Thor, a new-generation computing platform designed specifically for physical AI and humanoid robots. CEO Jensen Huang called it the "ultimate supercomputer that opens the era of physical AI and general-purpose robots".

Jetson Thor integrates a Blackwell-architecture GPU and a 14-core Arm Neoverse CPU, and is equipped with 128 GB of memory with a memory bandwidth of 273 GB/s.

At FP4 and FP8 precision, its AI compute reaches 2070 TFLOPS and 1035 TFLOPS respectively, which lets it efficiently accelerate inference for generative AI and large Transformer models at the edge.

The chip has been specifically optimized for running multiple AI workloads concurrently, and is aimed at advancing visual AI agents and complex robot systems. Compared with the previous-generation Jetson Orin, Jetson Thor delivers 7.5 times the AI compute performance, 3.5 times the energy efficiency, 3.1 times the CPU performance, and 10 times the I/O throughput. Compared with products from ten years ago, its overall AI performance has increased by up to 7000 times.
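The figures quoted above can be cross-checked with simple arithmetic. The following is a minimal sketch, not an official benchmark: it assumes (the article does not say so) that the 7.5-fold AI compute gain is measured against the 2070 TFLOPS FP4 figure, and the function names are hypothetical.

```python
# Figures taken from the article.
FP4_TFLOPS = 2070.0
FP8_TFLOPS = 1035.0
AI_COMPUTE_SPEEDUP = 7.5  # Jetson Thor vs. Jetson Orin, as reported


def precision_ratio(low_precision_tflops: float, high_precision_tflops: float) -> float:
    """How many times more throughput the lower-precision format delivers."""
    return low_precision_tflops / high_precision_tflops


def implied_baseline(current_tflops: float, speedup: float) -> float:
    """Previous-generation figure implied by a reported speedup.

    Assumption: the speedup was measured against `current_tflops`.
    """
    return current_tflops / speedup


if __name__ == "__main__":
    # Halving the precision from FP8 to FP4 doubles the quoted throughput.
    print(precision_ratio(FP4_TFLOPS, FP8_TFLOPS))
    # Implied previous-generation compute under the assumption above.
    print(implied_baseline(FP4_TFLOPS, AI_COMPUTE_SPEEDUP))
```

Under that assumption, the implied previous-generation figure is about 276 in the same units. The article does not state which precision or sparsity setting the comparison uses, so this is only a rough consistency check.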

Jetson Thor is fully compatible with NVIDIA's cloud-to-edge software stack, supports a variety of mainstream AI frameworks, and can be used with production models from many companies, including ByteDance, DeepSeek, Alibaba Qwen, Google Gemini, Meta, Mistral AI, OpenAI, and Physical Intelligence (π), among others.

In addition, the platform integrates seamlessly with the NVIDIA Isaac robotics platform, the GR00T humanoid robot foundation model, the Metropolis visual AI platform, and the Holoscan real-time sensor processing platform.

In terms of real-time control, Jetson Thor performs strongly: it can process 16 sensor inputs in parallel, and when running the Llama 3B and Qwen 2.5 VL 3B models, the time to first token is within 200 milliseconds. Each subsequent token takes no more than 50 milliseconds, and the reported output rate exceeds 25 tokens per second, a significant improvement in responsiveness over its predecessor.
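The latency figures above can be related to throughput with a short calculation. The sketch below is illustrative only; the function names are hypothetical, and the defaults simply reuse the article's 200 ms and 50 ms figures as upper bounds. Note that a flat 50 ms per token corresponds to 20 tokens per second, so an output rate above 25 tokens per second implies an actual per-token latency below 40 ms.

```python
def tokens_per_second(ms_per_token: float) -> float:
    """Steady-state decode rate for a given per-token latency."""
    return 1000.0 / ms_per_token


def max_ms_per_token(target_tokens_per_second: float) -> float:
    """Largest per-token latency that still reaches the target rate."""
    return 1000.0 / target_tokens_per_second


def response_time_ms(n_tokens: int, ttft_ms: float = 200.0,
                     ms_per_token: float = 50.0) -> float:
    """Total time to produce n_tokens: first token, then steady decoding."""
    if n_tokens < 1:
        raise ValueError("n_tokens must be at least 1")
    return ttft_ms + (n_tokens - 1) * ms_per_token


if __name__ == "__main__":
    print(tokens_per_second(50.0))   # rate at exactly 50 ms per token
    print(max_ms_per_token(25.0))    # latency needed for 25 tokens/s
    print(response_time_ms(100))     # worst case for a 100-token reply
```

With the worst-case figures, a 100-token reply would take 200 ms plus 99 further tokens at 50 ms each, or about 5.2 seconds in total.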